Explaining how mistakes are made using Swiss cheese and a lawnmower

A unique introduction to human error

This is the third article in the Psyverse #2 series. To read the two previous ones, see the compendium.

There is no getting around the fact that health and safety is a very boring subject.

This was made clear to me when I volunteered to give an induction talk to new PhD students at the start of my third year. I arrived early, in the middle of the health and safety officer’s talk. I watched from the back of the room as he heroically tackled a plethora of health and safety directives. He spoke passionately about who to talk to in case something went wrong, where information on safe handling of equipment could be found, and empathetically reassured the onlooking students that everyone makes mistakes – it is far better to find help when things go wrong than try to fix things on one’s own.

It was a thoroughly impressive talk by a thoroughly impressive speaker. “Gosh, how am I going to follow that?” I remember thinking as I walked nervously up to the front.

When I turned to face the sea of eyes, I immediately understood my miscalculation. I had never seen a more bored audience in my life. Among the sullen faces, heads on desks and emotionally vacant expressions, one student was facing 90 degrees away from me, staring directly at a newly painted wall. “How am I going to follow that?” I thought a second time. I tried my best to energise and inspire the new cohort of PhD students, but all I managed was a quiet titter of laughter halfway through.

“That was brilliant Alex!” the postgraduate secretary commented, rushing up to greet me after, “I’ve never seen the new students laugh after the health and safety talk”. This might be the highest praise I have ever received.

Talking about health and safety is inevitably boring. But that doesn’t mean that doing health and safety should be boring. So rather than try to persuade you that the mind-numbingly long documents of guidelines and strategies and directives are somehow secretly sexy, or lecture you on the life-saving importance of health and safety rules that you all must follow to a tee, I’m going to argue that when health and safety is done well, you don’t see it as health and safety. It is a set of systems and culture that are part of the everyday machinations of one’s job.

Since health and safety applies to all jobs, from postal workers to gardeners to healthcare professionals, the theory behind this set of systems and culture is generalisable. So I hope you will join me in this exploration of Human factors, the subject concerning health and safety at work.1

Part of a good health and safety system/culture is knowing what to do when a mistake occurs. How do we rectify it? To answer this question, I need to talk about a lawnmower and Swiss cheese.

Four for the sides, please

Let’s say an employee named “Axel” has been hired by a lawnmower company as a door-to-door salesman. The company has just released a brand-new lawnmower. Axel goes around neighbourhoods looking for unkempt lawns to demonstrate the mighty power of the cutter 3000. Lo and behold, Axel finds just the lawn. And not only have the occupiers not thrown him off their property immediately, they’ve agreed to let Axel show them how the mower works.

As Axel is setting up the lawnmower, the occupiers ask him to set it on the highest setting. “No problemo!” Axel confidently replies, as he puts his hands on the push bar, strides confidently down the first horizontal line of lawn, and…

Axel has only gone and buzz-cut a bald line into the turf! He slowly turns his head to see the open-mouthed expressions of the couple that so keenly invited him to demonstrate the cutter 3000. It ends how most sales attempts end. Axel gets chucked out by the occupiers.

Now, in this circumstance (which definitely did not happen with me and my own lawn), the primal urge is to blame.2 The mistake was Axel’s fault. In the context of a group of people, like in an organisation, this is what is called a blame culture. When someone makes a mistake, often the reaction is to find a small number of people who are responsible (who are then punished), drawing attention away from possible systemic issues. From The Design of Everyday Things3 by Don Norman:

Humans err continually; it is an intrinsic part of our nature. System design should take this into account. Pinning the blame on the person may be a comfortable way to proceed, but why was the system ever designed so that a single act by a single person could cause calamity? Worse, blaming the person without fixing the root, underlying cause does not fix the problem: the same error is likely to be repeated by someone else.

Rather than immediately throwing Axel out, thus leaving a non-symmetric sideburnless garden and the lack of what is otherwise an excellent lawnmower, the friendly couple could have asked Axel what went wrong. Together they could try to piece together how the mistake was made, to understand how it could be prevented in future. This is the opposite4 of a blame culture – a learning culture (sometimes known as a “supporting culture” or “no-blame” culture).

In communication with the lawnmower company, changes could be made to the procedure or design of the cutter 3000, ensuring no lawn will feel naked again. If the company keeps finding that Axel seems to have a pathological obsession with removing as much grass as possible, while other salesmen have stopped buzz-cutting grass, the company could ask Axel further questions. If he were found to have made the mistake on purpose,5 disciplinary procedures could be undertaken. This is a “just culture”, which lies between a blame and a learning culture.6 While learning is the ultimate aim, individuals have responsibility for their actions and can be sent for further training or disciplined if found to be engaging in risky behaviour deliberately.

Let us say that Axel made an honest mistake. How could we learn from it?

Slicing Swiss Cheese

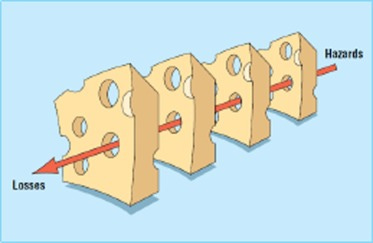

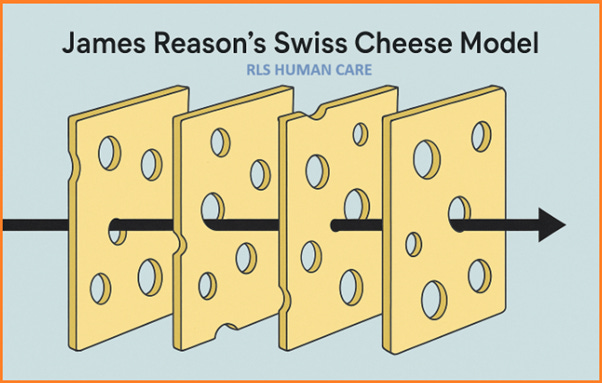

First, we need a model of how mistakes occur within an organisational system. Fortunately, a British psychologist called James Reason came up with such a model in the late 1980s. He imagined a series of layers representing defences and safeguards within a system to guard against hazards.7 Rather than being perfect, these defences have holes in them. Rob Lee, Director of the Bureau of Air Safety Investigation in Canberra at the time, suggested to Reason that the sequence of layers with holes looked a lot like Swiss cheese. Reason took this idea and ran with it, publishing a figure utilising Swiss cheese in his 2000 article Human error: models and management. And thus, the Swiss cheese model was born.

So, as you can see from the Swiss cheese figure above, when anyone makes even the tiniest mistake, everyone dies.

Wait…

That isn’t right…

Can we look at the Swiss cheese figure again?

James Reason has only gone and made an error in a paper about how errors are made! You cannot just copy and paste the same slice of Swiss cheese!8 It seems like human laziness has triumphed once again.

You see how easy it is to blame?

So, let us use the Swiss cheese model to help us understand how James Reason, the creator of the Swiss cheese model, made a mistake while trying to communicate the Swiss cheese model.

Firstly, we need to understand what the Swiss cheese model is actually supposed to represent.

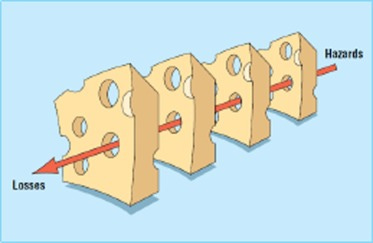

The layers of cheese are defences against a source of harm (hazard), so that an event that causes harm to a person (loss) doesn’t occur. These can range from organisational influences to environmental and psychological conditions. The holes are gaps in these defences (e.g. toxic work culture, budget constraints, bugs in code, weakness in a safety barrier, etc.).9

The layers of cheese are not identical in nature. Together, they represent a system of defences as a whole, but each individual layer represents a different part of the system. In other words, the holes won’t be in the same places. The layers form a hierarchy, with the start of the arrow going through the “blunt end”, and the head of the arrow through the “sharp end”.

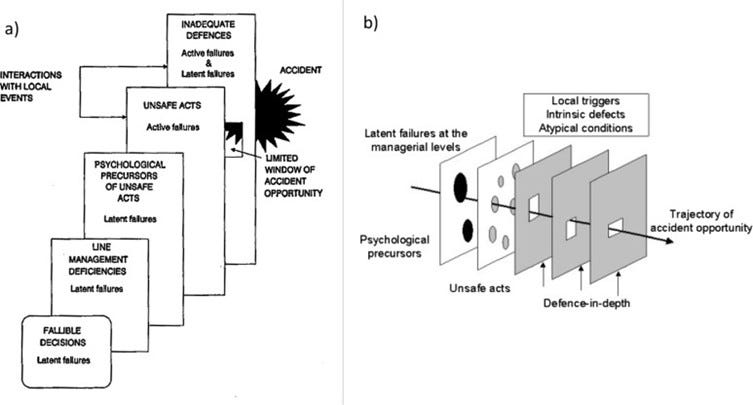

The blunt end is usually remote from any accident (or loss) in both time and space. The slices of cheese here represent senior decision makers, managers, the design of the system procedures, and organisational culture. The failures, or holes in the Swiss cheese at this end, are “latent conditions”. From Human error: models and management by James Reason:

[Latent conditions] arise from decisions made by designers, builders, procedure writers, and top level management…. Latent conditions have two kinds of adverse effect: they can translate into error provoking conditions within the local workplace (for example, time pressure, understaffing, inadequate equipment, fatigue, and inexperience) and they can create longlasting holes or weaknesses in the defences (untrustworthy alarms and indicators, unworkable procedures, design and construction deficiencies, etc). Latent conditions—as the term suggests—may lie dormant within the system for many years before they combine with active failures and local triggers to create an accident opportunity.

The sharp end is where frontline workers10 are in direct contact with the system environment, whether that be another person (e.g. a patient), a machine (e.g. an aeroplane), or a terrain (e.g. a quarry). The slices of cheese here represent the state of the system conditions or the psychological/physical state of the frontline worker. The holes in these slices of cheese are called “active failures”, or “unsafe acts”. These are slips, lapses, fumbles, mistakes and procedural violations.11

Note that the holes in the Swiss cheese represent both active failures and latent conditions. Generally, latent conditions are higher up the hierarchy, nearer the hazard, with active failures lower down. Early versions of the Swiss cheese model had distinct explicit representations for each layer. But given the heterogeneity12 of health and safety systems, the layers were eventually left unnamed so that different systems could be labelled in the way that suited them best.13

Importantly, as James Reason notes in the very paper he published the misleading Swiss cheese figure in:

[Unlike in Swiss cheese the] holes are continually opening, shutting, and shifting their location. The presence of holes in any one “slice” does not normally cause a bad outcome. Usually this can happen only when the holes in many layers momentarily line up to permit a trajectory of accident opportunity—bringing hazards into damaging contact with victims.

So, the Swiss cheese figure is supposed to represent a snapshot in time when all the holes manage to line up. Perhaps a more accurate Swiss Cheese Model representation would be this figure:

Each of the layers has holes in different places and different sizes. The arrow touches at least one of the sides of the holes it passes through, which helps convey the momentary nature of the holes lining up. Finally, the arrow passes through a very tiny hole in one of the layers to convey that the hole has just opened up.14
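For readers who prefer numbers to dairy, here is a minimal sketch of why copying and pasting the same slice matters. It is a toy simulation of my own, not anything from Reason's paper: every name and parameter (`random_slice`, `breach_rate`, ten possible hole positions, a 20% chance of a hole at each) is an assumption made purely for illustration. Each trial takes a fresh snapshot of where the holes are, then checks whether a hazard arriving at a random position finds a hole in every slice.

```python
import random


def random_slice(n_positions, hole_prob, rng):
    """Return the set of positions where this slice currently has a hole."""
    return {i for i in range(n_positions) if rng.random() < hole_prob}


def hazard_gets_through(slices, position):
    """A loss occurs only if every slice has a hole at the same position."""
    return all(position in holes for holes in slices)


def breach_rate(n_slices, identical, n_positions=10, hole_prob=0.2,
                trials=20_000, seed=0):
    """Estimate how often a hazard passes through all slices.

    identical=True reuses one slice n_slices times (the copy-and-paste figure).
    identical=False gives each slice its own, independently placed holes.
    All defaults are illustrative assumptions, not measured values.
    """
    rng = random.Random(seed)
    breaches = 0
    for _ in range(trials):
        if identical:
            slices = [random_slice(n_positions, hole_prob, rng)] * n_slices
        else:
            slices = [random_slice(n_positions, hole_prob, rng)
                      for _ in range(n_slices)]
        position = rng.randrange(n_positions)  # where the hazard arrives
        if hazard_gets_through(slices, position):
            breaches += 1
    return breaches / trials


if __name__ == "__main__":
    print("identical slices:  ", breach_rate(4, identical=True))
    print("independent slices:", breach_rate(4, identical=False))
```

With identical slices, the breach rate should sit close to `hole_prob`, because a single hole is enough to let the hazard straight through. With independent slices it should fall towards `hole_prob ** n_slices`, which is the whole point of having more than one layer of defence.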

So, back to Reason’s Swiss cheese figure from earlier. The “unsafe acts” that Reason made were copying and pasting the same Swiss Cheese and drawing an arrow directly through the middle of the holes.15 To get to the bottom of the latent conditions, a root cause analysis would need to take place. This is where an investigation of the “unsafe act” and the events leading up to it attempts to determine where the holes in the system were. In lieu of a root cause analysis, we can guess that some of the latent conditions were: the likely clunky software at the time of publishing (2000s), the publishing checks, and the peer review system.16

Swiss Cheesing the lawnmower

With our understanding of the Swiss cheese model in mind, let us look back at the lawnmower, and my active failures… No wait! Axel’s active failures…

Ack. I cannot keep up this ingenious facade any longer. Alas, it was me… I embarrassingly buzz-cut my own lawn.

With that out of the way, my first active failure was that I didn’t read the manual fully. I didn’t learn the ins and outs of how the lawnmower worked.

Then, I set the height of the lawnmower without double-checking it was correct. Finally, I didn’t test out the lawnmower on a very small inconsequential patch of grass, much like what one would do when testing paint colour.

The lawnmower company could give out directives for owners to follow as a slip of paper in the box the lawnmower came in. However, this would be like a band-aid (or “plaster” if British) over a continuously cracking system. Wiegmann et al. (2022) illustrate the shortcomings of such local fixes through a classic Dutch childhood story. A young boy named Hans uses his fingers to plug holes in a dam near his village, saving the inhabitants from flooding.

While such corrective actions are needed, and should be implemented, they often do not address the underlying causes of the problem (i.e., the reasons for the leak in the dam). It is just a matter of time before the next hole opens up. But what happens when little Hans ultimately runs out of fingers and toes to plug up the new holes that will eventually occur? Clearly, there is a need for the development [of] other solutions that are implemented upstream from the village and dam. Perhaps the water upstream can be rerouted, or other dams put in place. Whatever intervention we come up with, it needs to reduce the pressure on the dam so that other holes don’t emerge.

In other words, we need to address the latent conditions in the system so that new holes don’t occur, or even better, so that Hans doesn’t need to put his fingers in the dam holes17 in the first place.18

What were the latent conditions in my lawnmower escapade? To find out, we need to do an investigation.

First of all, how did my buzz-cut mistake occur? Well, I set up the lawnmower by unfolding the push bar, putting in the battery, and plugging in the safety key. Then I looked at the tag attached to the push bar to check if the lawnmower was set at the right height. According to the label, the lawnmower was originally set to “low”, and I wanted it on “high”, so I reached down from my standing position behind the lawnmower and pulled the red handle to the “high” position. I did not question this. The handle being up on the lawnmower, to indicate the high setting, was intuitive to me.

Then, I turned the lawnmower on, walked forward, and made my lawn feel embarrassed for a couple of weeks.

So, what on earth happened? Well. When you take a look at the side of the lawnmower, the answer reveals itself.

Pulling the red lever back lowered the lawnmower, not raised it! Since I pulled the lever back while standing behind the lawnmower, the height indicators were obscured from view. Similarly, while standing, I could not easily see that the lawnmower had lowered down. And since from my position, everything made intuitive sense, my brain was not on the lookout for things to go wrong – I was focused on pushing the lawnmower to mow the grass.

My guess is the tag was intended to be viewed in the folded position of the lawnmower handle, when it was close to the lever. In other words, the tag was supposed to be viewed while facing the front of the lawnmower instead of standing behind it.19

Possible design solutions for these latent conditions20 could be to change the inner mechanism of the lawnmower lever so that the higher up position corresponds to the lawnmower being higher. Pictographs of some short grass and long grass could be placed on top of the lawnmower at each corresponding end of the lever “hole” so that when a person reaches down to change the lever, they can confirm it is at the setting they want. The tag on the lawnmower could be attached to the other side of the handle, and perhaps an outline of a lawnmower surrounding the lever diagram could show clearly which end is the front of the lawnmower and which is the back.

Reporting the problem

Now that I have spotted the active failures and latent conditions21 after committing my unsafe act (as well as devised possible solutions to plug some of these holes in the Swiss cheese), how could I communicate it back to the lawnmower company?

I could just send them an email. But I might just get a message back saying “we have read your concerns and passed your feedback on to the relevant person”, knowing full well the administrator on the other end of the email has probably deleted it. However, if the lawnmower company had a form I could fill in, specifically for me to report a mistake, I would be more confident my concerns would be looked at.22

These forms would be part of something called a safety reporting system. Originating from industries like aviation and nuclear, they provide a means for front-line workers to report unsafe acts.

While in my lawnmower scenario I have personally made the effort to conduct a very informal investigation, in the real world,23 front-line professionals usually don’t have the time to be unpaid investigators, nor would they be very happy about it. While it is beneficial for staff to be involved as much as possible in every aspect of safety, most of this job would go to an investigator. What we can expect is for front-line professionals to report their mistakes.

Compared to the aviation and nuclear industries, the implementation of reporting systems within healthcare is relatively recent, mostly occurring within the last twenty-five years or so. It has gone… well… I think it wouldn’t be too controversial to say the jury is out at the moment.

The series continues next Thursday.

If you didn’t find the article too cheesy or full of holes, please consider giving it a heart ❤️.

And if you know someone who would appreciate an unnecessarily thorough analysis of a bald stripe in a lawn, please do restack 🔄 or share 🔗. My lawn and I would be grateful!

The UK’s Health and Safety Executive says that:

Human factors refer to environmental, organisational and job factors, and human and individual characteristics, which influence behaviour at work in a way which can affect health and safety

This could be honest or dishonest, i.e. you could blame other people for mistakes you have made.

More “different” rather than “opposite”. I’ve created a spectrum as an aid for the reader.

This could take the form of impaired judgement, malicious action, reckless action, risky action or unintentional error (there is still a problem with Axel’s lawnmower that isn’t apparent in other ones). See Boysen (2013) for more details.

Sort of… It isn’t really in the middle of the two but kind of adapts aspects from both. See this definition of a just culture by Reason (1997):

An atmosphere of trust in which people are encouraged, even rewarded, for providing essential safety-related information – but in which they are also clear about where the line must be drawn between acceptable and unacceptable behaviour

I’m using a very broad definition of hazard in this article. Generally, a hazard is defined as “a potential source of harm or adverse health effect on a person or persons” (from the Irish Health and Safety Authority). I suppose you could argue the lawnmower’s height setting is a source of harm to the grass’s self-esteem…

Using the same slice of cheese for each layer implies that whenever any mistake is made (represented by any hole), harm is immediately inflicted upon the person who made it, i.e. we always go straight from hazard (potential harm) to loss (harm).

We can use a trapeze show as an example. The main hazard would be falling from the trapeze bar, and the loss would be injury as a result of falling. The “defences” may be a long training procedure before being allowed to perform, the quality of the training equipment, a culture which doesn’t pressure performers into performing (e.g. if they have an injury), and a net below the trapeze performance area to catch any falls. “Holes” could be a packed performance schedule (not allowing performers to recover appropriately), inadequate training equipment, a culture with a “throw into the deep end” attitude to rookies, and literally a hole in the safety net.

The frontline workers could be nurses/doctors with a patient, construction workers on a building site, airline pilots in an aeroplane, to name a few.

Slips are execution failures where a frontline worker has the correct intention, but the action deviates from their plan (e.g. pressing the wrong button).

Lapses are memory failures where a worker forgets an intended action (e.g. forgetting to press a button).

Fumbles are active execution failures. These are slips related to poor physical execution (e.g. pressing the wrong button through miscoordination - liker fir instancew on a keybord).

Mistakes are planning or problem-solving failures where the frontline worker’s action goes as intended, but the plan/intention itself is incorrect (e.g. a button is mislabelled, meaning the wrong button is pressed). These are split further into rule-based mistakes, where a bad rule is applied (or a good rule misapplied), and knowledge-based mistakes, where a worker finds themselves in a completely novel situation, for which no rule exists, and incorrect reasoning occurs.

Procedural violations are deliberate deviations from a rule, procedure, standard or safe operating practice (e.g. a worker presses the wrong button on purpose). These intentional actions are split further into routine (common practice despite being unsafe), exceptional (unusual deliberate departures from procedure) and optimising (cutting corners) violations. It is important to note that procedural violations are rarely malicious and usually result from a worker attempting to get a job done as efficiently as possible.
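The five definitions above can be read as a small decision procedure. What follows is a rough sketch of that reading, assuming an unsafe act has already happened and that an investigator can answer four yes/no questions about it. The names `UnsafeAct` and `classify`, the four flags, and the order in which the questions are asked are all my own illustrative assumptions rather than an official taxonomy tool.

```python
from enum import Enum


class UnsafeAct(Enum):
    SLIP = "slip"
    LAPSE = "lapse"
    FUMBLE = "fumble"
    MISTAKE = "mistake"
    VIOLATION = "procedural violation"


def classify(deliberate_deviation, plan_was_correct, step_forgotten,
             clumsy_execution):
    """Classify an unsafe act that has already occurred (hypothetical helper).

    Questions are asked in order: intent first, then the plan, then execution.
    """
    if deliberate_deviation:
        return UnsafeAct.VIOLATION  # the rule was knowingly broken
    if not plan_was_correct:
        return UnsafeAct.MISTAKE    # action went as intended, plan was wrong
    if step_forgotten:
        return UnsafeAct.LAPSE      # memory failure
    if clumsy_execution:
        return UnsafeAct.FUMBLE     # poor physical execution
    return UnsafeAct.SLIP           # right intention, wrong action


if __name__ == "__main__":
    # The mislabelled-button example: the worker pressed exactly the button
    # they meant to press, but the plan itself was wrong.
    print(classify(deliberate_deviation=False, plan_was_correct=False,
                   step_forgotten=False, clumsy_execution=False))
```

Real investigations are far messier than four booleans, so treat this as a memory aid for the categories and nothing more.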

For more on types of active failures, see Human Error by Reason or this short explainer from the UK’s Health and Safety Executive.

I don’t quite know why, but homogeneous and heterogeneous are two words my brain continually stumbles on, meaning-wise. So, for readers who also encounter this problem:

Homogeneity means that parts of a system are all similar to each other, whereas heterogeneity means that parts of a system are all different from each other.

They are not absolute terms; it is a spectrum, and the two words are used to describe which end of the spectrum a system lies towards.

One example is the Human Factors Analysis and Classification System (HFACS) for healthcare.

As a final note on the Swiss cheese model, it is meant to be a heuristic to help a general audience understand how organisations could become safer. Safety science has to encompass all the factors involved in the complex interaction of people with other people, as well as people with the environment. It is probably fair to say that nothing in safety science is simple.

Many criticisms of the Swiss cheese model fail to take into account other, more detailed versions of the heuristic Reason produced, such as:

I recommend the excellent article by Larouzee and Le Coze (2020) for an extensive look at the history of the Swiss cheese model.

The hazard in this case is that the readers misinterpret what the Swiss cheese model is supposed to mean (just search “Swiss cheese model” in Google Images for an array of interpretations).

The image processing software would have been much less advanced than today, meaning the creation of different sizes and shapes of cheese would be more difficult. Or perhaps Clippy didn’t remind Reason about the mistake...

There may be systemic issues in the structure of the editorial board (for instance, not being paid for their time, introducing time pressures)

While it would be a totally Alex move to tangent into an analysis of the peer review system, I think this article would end up going on forever…

Works both ways

Wiegmann et al. (2022) go on to clarify:

This is not to say that directly plugging up an active failure is unimportant. On the contrary, when an active failure is identified, action should be taken to directly address the hazard. However, sometimes such fixes are seen as “system fixes” particularly when they can be easily applied and spread.

A hint that this was supposed to be viewed from the front comes from the orientation of the lever “notches” in the tag image, which only align when viewed from the front of the lawnmower.

Though this still doesn’t explain why the label is on the wrong side of the lawnmower.

If going by HFACS, these would be “Preconditions for Unsafe Acts”.

Well, not quite…

Without knowing anything about the structure of the lawnmower company itself, the organisational & supervisory latent conditions are beyond our grasp. Possible places to look for them would be the procedures for user testing, organisational trade-offs (cost/time), etc.

The form could just be an empty box with the question “What happened?”, but if you are a large organisation receiving hundreds of these forms a week, ranging from something as small as “I input Mr Jenkins’ name as Mr Yenkins on an invoice” to “I accidentally amputated a toe with the lawnmower”, sorting through this information would be quite difficult. A structured report would be a better way to go.
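To make “structured” a little more concrete, here is one way such a report might look in code. This is only a sketch under my own assumptions: the field names, the three severity levels and the `triage` helper are hypothetical, and a real safety reporting system would have many more fields and a lot more thought behind them.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    NO_HARM = 0
    MINOR = 1
    SERIOUS = 2


@dataclass
class IncidentReport:
    what_happened: str           # the free-text "What happened?" box
    severity: Severity           # chosen by the reporter from a fixed list
    equipment: str = ""          # optional: what was being used
    suspected_causes: str = ""   # optional: the reporter's own guess


def triage(reports):
    """Return reports with the most severe first (hypothetical helper)."""
    return sorted(reports, key=lambda r: r.severity, reverse=True)


if __name__ == "__main__":
    inbox = [
        IncidentReport("Input Mr Jenkins' name as Mr Yenkins on an invoice",
                       Severity.NO_HARM),
        IncidentReport("Accidentally amputated a toe with the lawnmower",
                       Severity.SERIOUS, equipment="lawnmower"),
    ]
    for report in triage(inbox):
        print(report.severity.name, "-", report.what_happened)
```

The free-text box is still there, but the fixed severity field means the amputated toe rises to the top of the pile without anyone having to read every Yenkins first.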